2026

MuddyHands

A solo 5-day design sprint exploring how potters document work with muddy hands

MuddyHands is a voice-first documentation tool for potters. Designed and prototyped in a 5-day solo design sprint, the project explores how separating capture from reflection can preserve creative flow while still supporting meaningful learning.

PROBLEM

Potters can’t reliably document their making process while working with wet clay. Typing, photographing, or organizing notes interrupts flow and is often physically impossible, causing process insights to be forgotten or lost.

SOLUTION

MuddyHands enables one-tap voice capture during making and defers editing, summarization, and curation to a later reflection phase. By separating capture from sense-making, the app preserves creative flow while still producing meaningful, organized documentation.

PROBLEM SPACE

I defined the problem scope for this app.

What makes a good potter? Practice + reflection. But

So documentation shouldn’t happen during sense-making; it should happen around it.

POTTER JOURNEY MAP

The user journey map revealed that the core challenge wasn’t organizing information, but respecting when users are cognitively able to do so.

FLOW CHART

The flow chart revealed that effective documentation depends on separating capture from reflection and treating deletion and summarization as core parts of learning.

On Day 1, I built a deep understanding of the problem and mapped the end-to-end experience. I reframed documentation as a two-phase cognitive process rather than a single task.

MVP SCOPE

I deliberately scoped MuddyHands to a narrow, behavior-focused MVP. Instead of trying to solve documentation end-to-end, I focused on the moment where most tools fail: recording process without breaking flow.

Included in the MVP

One-tap voice capture during making

Sessions to group work by time

Pieces as the unit of reflection

Review and deletion of raw notes

Explicitly out of scope

Including reference pictures during the making process

Image capture during making

Social sharing or collaboration

Moving sessions to the trimming/glazing phases

By cutting these early, I protected the core behavior the product was meant to test.
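
As a rough illustration of the in-scope items, the MVP could map to a data model like the TypeScript sketch below. This is not the code the prototype actually used: every type and function name here is hypothetical, and it assumes each voice note ends up as editable text.

```typescript
// Hypothetical data model for the MVP scope. All names are illustrative.

interface VoiceNote {
  id: string;
  recordedAt: Date;
  text: string; // assumption: the voice capture becomes editable text
}

// A piece is the unit of reflection: notes attach to it.
interface Piece {
  id: string;
  label: string;
  notes: VoiceNote[];
}

// A session groups the pieces worked on in one stretch of time.
interface Session {
  id: string;
  startedAt: Date;
  pieces: Piece[];
}

let nextNoteNumber = 1; // simple counter so ids stay unique after deletions

// One-tap capture: append a raw note and ask the potter for nothing else.
function captureNote(piece: Piece, text: string, now: Date = new Date()): Piece {
  const note: VoiceNote = { id: `note-${nextNoteNumber++}`, recordedAt: now, text };
  return { ...piece, notes: [...piece.notes, note] };
}

// Review phase: deleting a raw note is a first-class operation.
function deleteNote(piece: Piece, noteId: string): Piece {
  return { ...piece, notes: piece.notes.filter((n) => n.id !== noteId) };
}
```

Both functions return a new piece rather than mutating the old one, which keeps capture and review easy to reason about separately.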

IA

With the scope locked, I designed the information architecture around cognitive state, not features.

Designing the IA this way surfaced a key insight: Reflection works bottom-up, not top-down.

Users need to understand individual pieces before making sense of a session. The IA enforces this order by design, preventing premature summarization or false completion.

SKETCHING & ITERATIONS

With the IA in place, I started exploring the range of possible experiences.

When sketching the possible flows, I noticed some confusing spots.

Clarifying Actions vs. System States

Problem: During sketching, the flow used the word “complete” in multiple ways—some as user actions and others as system statuses—creating unnecessary cognitive load and ambiguity about what was actually happening.

Solution: I separated actions from system states. “Done” explicitly ends the capture phase, “Active” signals a session is open for reflection or continued recording, and “Completed” represents intentional closure. Reducing overlapping labels clarified the flow and made each stage easier to understand at a glance.
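
One way to picture the split between actions and states is the small TypeScript sketch below. Only the labels “Done”, “Active”, and “Completed” come from the design; the `resumeRecording` and `complete` action names, the `capturing` flag, and the exact transition rules are assumptions made for illustration.

```typescript
// Hypothetical sketch: user actions and system states are separate types.
type SessionStatus = "active" | "completed"; // system states
type SessionAction = "done" | "resumeRecording" | "complete"; // user actions

interface SessionState {
  status: SessionStatus;
  capturing: boolean; // true while voice capture is running
}

function applyAction(state: SessionState, action: SessionAction): SessionState {
  // Assumption: "Completed" is intentional closure, so nothing changes afterwards.
  if (state.status === "completed") return state;

  switch (action) {
    case "done":
      // "Done" ends the capture phase; the session itself stays Active.
      return { ...state, capturing: false };
    case "resumeRecording":
      // An Active session stays open for continued recording.
      return { ...state, capturing: true };
    case "complete":
      // Assumption: a session cannot be closed while capture is still running.
      return state.capturing ? state : { status: "completed", capturing: false };
  }
}
```

Because the action names never reuse a status name, a label on screen can only mean one thing: either something the user does or something the system reports.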

Making Reflection Optional, Not Obligatory

Problem: The initial design required users to review pieces one by one before completing a session, turning reflection into a checklist and making it feel like work—especially when multiple sessions existed.

Solution: I removed mandatory review flows and reframed reflection as optional and self-directed. Users can edit notes, add thoughts, or skip reflection entirely. This shift reduced friction and positioned reflection as an opportunity for learning rather than a task users must finish.

By the end of Day 2, I had translated the MVP scope and information architecture into sketches, iterating on clarity by separating actions from system states and removing mandatory review flows to keep reflection flexible and low-pressure.

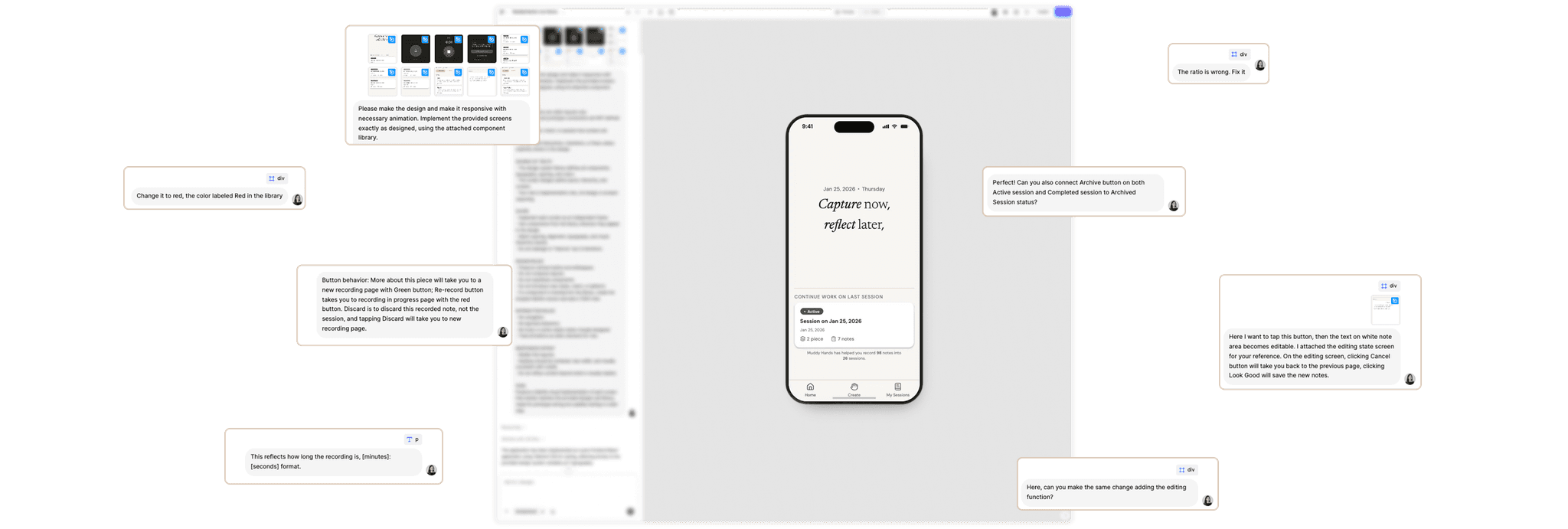

VIBE-CODING

I started Day 3 by using v0 as an exploratory prototyping tool to quickly translate the full information architecture and user flows into a working product. This allowed me to see how capture and sessions behaved together and validate the end-to-end flow early.

While the prototype functioned well structurally, the UI was generic and not expressive enough for testing subtle distinctions between actions and system states. At this stage, v0 was helpful for validating flow, but insufficient for refining interaction clarity and visual tone.

At this point, I had three paths to refine the interface:

01. Continue iterating through prompts in v0

02. Define a design system inside v0

03. Design the system directly in Figma

While defining a design system within v0 was technically possible, it shifted the design work into text-based negotiation rather than visual decision-making. For a sprint project where speed is critical and subtle hierarchy, spacing, and tone also matter, this introduced unnecessary friction and ambiguity.

Designing directly in Figma let me:

Make visual decisions directly rather than describing them

Evaluate hierarchy and rhythm at a glance

Reduce iteration overhead caused by prompt interpretation

DESIGN SYSTEM

I created a small, purpose-built design system in Figma to support consistency across capture and reflection states.

Instead of aiming for completeness, the system focused on the few elements that mattered most: hierarchy, calm spacing, and clear status signaling.

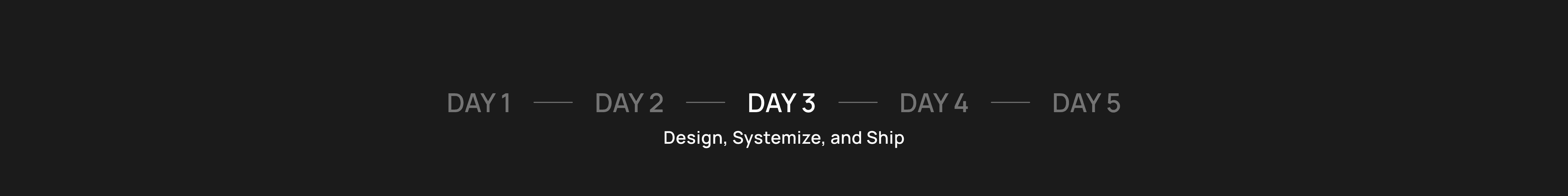

HI-FI WIREFRAME

With the system in place, I designed a set of key frames to define the core experience. These screens captured the most important transitions—between capture, reflection, and closure—and served as visual references for implementation.


MVP DONE

To turn the designs into a testable product, I used Figma Make, treating the design system and key frames as the single source of truth. This allowed me to implement the MVP faithfully without reinterpreting layout or hierarchy.

This approach let me move quickly while preserving the design decisions I needed to test.

By the end of Day 3, I had a published, end-to-end MVP ready to be tested in a real pottery class environment.

CONTEXT

To test the concept in a realistic setting, I brought the MVP to my weekly pottery class and asked classmates to try the app and share their thoughts. Because this was an informal sprint test, the goal was to understand perceived value rather than measure performance metrics.

What I wanted to learn:

Does organizing notes by session and piece make sense?

Does the concept feel helpful for reflection and learning?

Would potters see value in keeping these records over time?

WHAT USERS SAID

Josie, my classmate

Josie felt the app would make it easier to keep track of pieces and processes across a session. She mentioned that having notes organized by piece would help her reflect later on what worked and what didn’t.

“I usually forget what I did with each piece after class. Having it organized like this would make it much easier to look back and remember.”

Caylynn, my instructor

Caylynn saw potential in the structure for helping potters document their process more intentionally and track improvement over time.

“It would be really satisfying to look back and see all the pieces and sessions—it’s like a record of your progress.”

Testing with real potters validated that organizing notes by session and piece supports reflection and creates a satisfying record of progress.

FINAL VERSION

After testing MuddyHands with real potters, the final day focused on reflecting on what the sprint revealed and preparing the product for presentation. I made several small refinements based on testing and my own observations, fixing minor bugs and polishing interactions to ensure the experience worked smoothly.

What this sprint validated

01. Separating capture and reflection works well

Potters appreciated the ability to quickly record notes during making and revisit them later when their attention was free.

02. Organizing work by session and piece feels natural

This structure aligned with how potters already think about their workflow in the studio.

03. Reflection can be lightweight

Users didn’t need complex review systems; simply editing notes and revisiting pieces was enough to support learning and progress tracking.

What I would explore next

We might add photos after trimming or glazing to complement notes

We might explore long-term session history to visualize progress over time

We might test the app with more potters across different techniques, including handbuilding

Happy building!